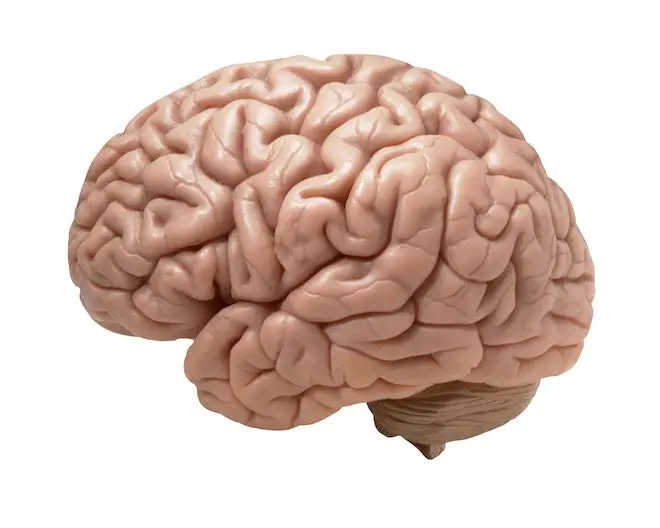

Our brain signals are a window into our souls. More broadly, brain-computer interfaces that read those signals and accompanying algorithms that process the signals are the windows. Researchers at Columbia University have been working to understand what our brains are thinking about, and in particular to translate the signals produced by the auditory cortex into coherent speech.

The researchers have been using intracranial electroencephalography (iEEG), also known as electrocorticography (ECoG), which involves placing an implant directly onto the surface of the brain, to read the signals. Special algorithms, based on deep neural network techniques, were used to process the input into fairly intelligible speech.

Because one can’t perform such experiments on volunteers, the researchers worked with epilepsy patients going in for surgery. These patients were already having their brains accessed by craniotomy, which provided an opportunity to test the technology without having to do additional surgeries.

“We found that people could understand and repeat the sounds about 75% of the time, which is well above and beyond any previous attempts,” Dr. Nima Mesgarani, senior author of a paper appearing in the journal Scientific Reports, said in a statement. “The sensitive vocoder and powerful neural networks represented the sounds the patients had originally listened to with surprising accuracy.”

Here’s an audio reconstruction of one individual counting off numbers, derived purely from that person’s brain waves: